Automatically Evading Classifiers

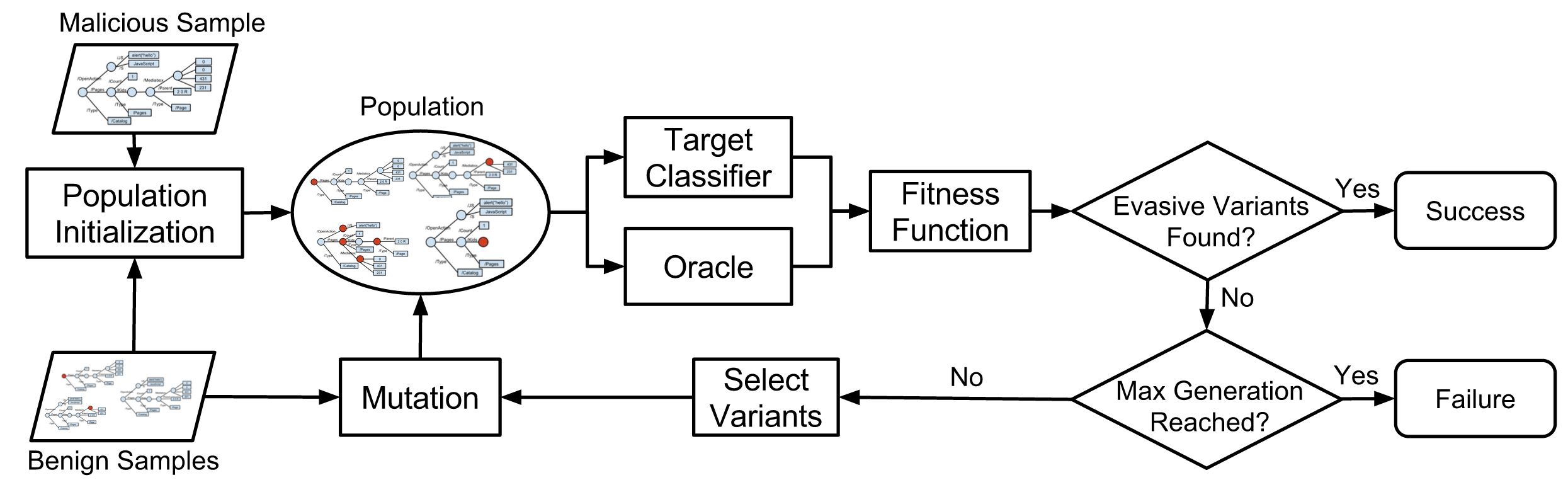

EvadeML is an evolutionary framework based on genetic programming for automatically finding variants that evade detection by machine learning-based malware classifiers.

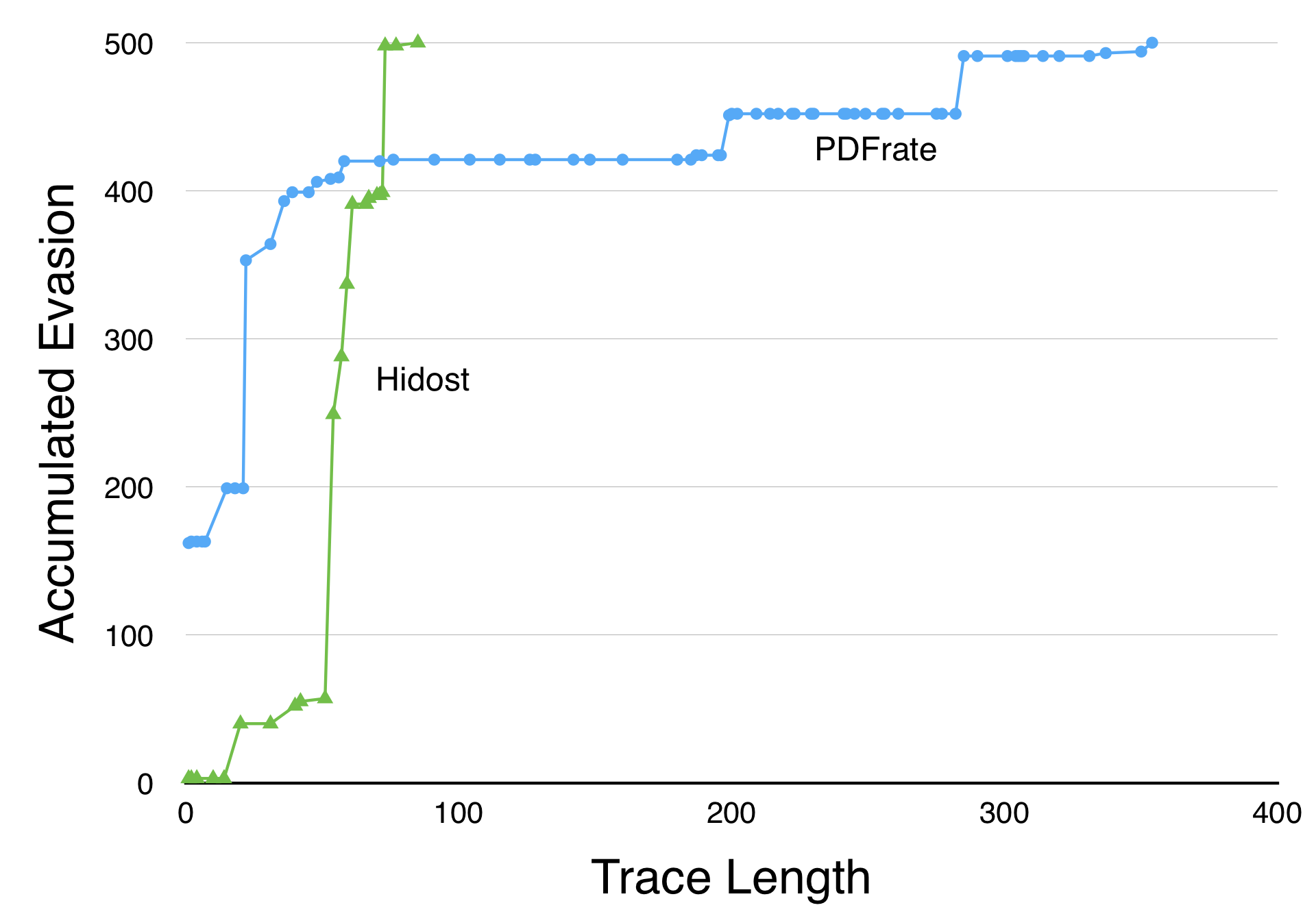

Machine learning is widely used to develop classifiers for security tasks. However, the robustness of these methods against motivated adversaries is uncertain. In this work, we propose a generic method to evaluate the robustness of classifiers under attack. The key idea is to stochastically manipulate a malicious sample to find a variant that preserves the malicious behavior but is classified as benign by the classifier. We present a general approach to search for evasive variants and report on results from experiments using our techniques against two PDF malware classifiers, PDFrate and Hidost.

Our method is able to automatically find evasive variants for both classifiers for all of the 500 malicious seeds in our study. Our results suggest a general method for evaluating classifiers used in security applications, and raise serious doubts about the effectiveness of classifiers based on superficial features in the presence of adversaries.
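The search described above can be illustrated with a toy evolutionary loop. This is a minimal sketch under stated assumptions, not the EvadeML implementation: samples are plain lists of tokens rather than PDF files, and `mutate`, `evolve`, `classifier_score`, `is_still_malicious`, and the 0.5 threshold are all hypothetical names and values chosen for illustration. It shows only the general shape of the idea: mutate variants at random, discard those that lose the malicious behavior, and favor those the classifier scores as more benign.

```python
import random
from typing import Callable, List, Optional

Sample = List[str]  # assumption: a sample is a list of tokens, not a real PDF


def mutate(sample: Sample, benign_pool: Sample, rng: random.Random) -> Sample:
    """Return a copy of the sample with one random insert, delete, or replace."""
    variant = list(sample)
    op = rng.choice(["insert", "delete", "replace"])
    if op == "delete" and variant:
        del variant[rng.randrange(len(variant))]
    elif op == "insert":
        variant.insert(rng.randrange(len(variant) + 1), rng.choice(benign_pool))
    elif op == "replace" and variant:
        variant[rng.randrange(len(variant))] = rng.choice(benign_pool)
    return variant


def evolve(
    seed: Sample,
    classifier_score: Callable[[Sample], float],
    is_still_malicious: Callable[[Sample], bool],
    benign_pool: Sample,
    pop_size: int = 20,
    generations: int = 50,
    threshold: float = 0.5,
    rng_seed: int = 0,
) -> Optional[Sample]:
    """Search for a variant that stays malicious but scores below the threshold."""
    rng = random.Random(rng_seed)
    population = [list(seed) for _ in range(pop_size)]
    for _ in range(generations):
        candidates = [mutate(p, benign_pool, rng) for p in population]
        # Keep only variants that preserve the malicious behavior.
        viable = [c for c in candidates if is_still_malicious(c)]
        for c in viable:
            if classifier_score(c) < threshold:
                return c  # evasive variant found
        # Select the variants the classifier finds least suspicious;
        # restart from the seed if every candidate was corrupted.
        viable.sort(key=classifier_score)
        survivors = viable[: max(1, pop_size // 2)] or [list(seed)]
        population = [list(rng.choice(survivors)) for _ in range(pop_size)]
    return None


# Toy stand-ins (assumptions): a classifier that scores the fraction of
# suspicious tokens, and an oracle that requires the "payload" token.
SUSPICIOUS = {"payload", "js", "openaction"}


def classifier_score(sample: Sample) -> float:
    return sum(t in SUSPICIOUS for t in sample) / len(sample) if sample else 0.0


def is_still_malicious(sample: Sample) -> bool:
    return "payload" in sample


variant = evolve(
    seed=["payload", "js", "js", "openaction"],
    classifier_score=classifier_score,
    is_still_malicious=is_still_malicious,
    benign_pool=["font", "page", "text"],
)
print(variant)
```

In this toy setting the classifier relies on a superficial feature (the share of suspicious tokens), so padding the sample with benign tokens is enough to lower its score while the oracle check still passes.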

References

Paper

Weilin Xu, Yanjun Qi, and David Evans. Automatically Evading Classifiers: A Case Study on PDF Malware Classifiers. Network and Distributed System Security Symposium (NDSS) 2016, 21-24 February 2016, San Diego, California. Full paper (15 pages): [PDF]

Talks

Classifiers Under Attack, David Evans’ talk at USENIX Enigma 2017 (1 February 2017)

Automatically Evading Classifiers, Weilin Xu’s talk at NDSS 2016 (24 February 2016)


Source Code

https://github.com/uvasrg/EvadeML

Team

Weilin Xu (Lead PhD Student)

Anant Kharkar (Undergraduate Researcher)

Helen Simecek (Undergraduate Researcher)

Yanjun Qi (Faculty Co-Advisor)

David Evans (Faculty Co-Advisor)